Last month, a security firm called RedAccess pointed a scanner at the open web and found 380,000 exposed apps built with AI coding tools. Around 5,000 of those leaked sensitive corporate data... patient records, financial files, API keys sitting in the open for anyone with a browser to grab. The story made VentureBeat and a few security blogs, then disappeared under the next AI funding headline.

I read it twice. Then I sat back, because I have seen this movie before.

What vibe-coding is, and why your team is doing it right now

If you have been heads-down on a real product for the last six months, here is the catch-up. Vibe-coding is what people call it when you describe what you want in plain English and an AI assistant writes the code. No syntax, no library lookup, no Stack Overflow rabbit hole. You vibe, the model builds.

It is fast. Frighteningly fast. A non-developer ships a working web app in an afternoon. A junior engineer clears a sprint of tickets in a morning. According to Diffian's 2026 security report, nearly half of all new code pushed to GitHub is now AI-generated, and the number is projected to hit 60% by the end of this year.

Your team is doing this. Right now. With or without your permission. Marketing has spun up a survey tool. Finance has a reporting dashboard nobody else knows about. The customer success lead built her own ticketing system over a long weekend. None of it went through code review. None of it went through security. And every bit of it is in production touching real customer data.

I have lived this exact story before

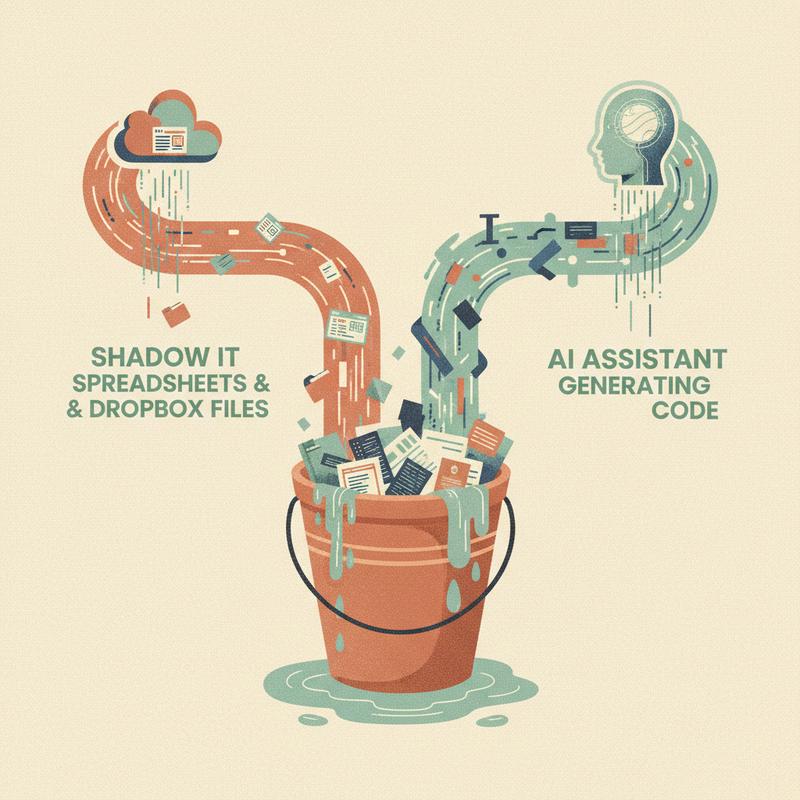

Back in the late 2000s and early 2010s, we had a name for the same problem. We called it shadow IT.

Marketing bought Dropbox. Sales adopted Salesforce without telling IT. Operations ran the whole quarter through a spreadsheet on someone's personal Google Drive. The IT team... my IT team in a few cases... had no idea what software was running the business until something broke or somebody left and we lost the password.

We dealt with it by pretending we could ban it. We sent emails. We blocked URLs. We wrote acceptable-use policies nobody read. None of it worked, because the people doing the shadow work were not malicious. They were trying to get their jobs done with the tools they could get, while IT made them fill out a six-week approval form to buy a $10 SaaS subscription.

Eventually we figured out the answer was not banning. It was visibility plus governance plus a culture which did not punish people for trying to be productive. Cloud Access Security Brokers showed us what was in use. Vendor reviews caught the worst data risks. And IT departments... the smart ones... stopped saying no and started saying "let me help you do this safely."

CyberAdvisors wrote up the parallel recently. Their stat is the one which should sting: 56% of security teams admit to using unapproved AI tools, while only 32% of organizations have formal AI controls in place. We have learned nothing.

The numbers nobody in your leadership meeting wants to read

Here is what makes vibe-coding worse than the Dropbox era. When marketing put a customer list in Dropbox, the risk was Dropbox getting breached. The risk was on the vendor.

When your CFO vibe-codes a P&L dashboard, the risk is in the code itself. The model writing the code does not know your security policies. It does not understand your threat model. It picks the first library which compiles and the first auth pattern it saw in training data.

Diffian's report tracked CVEs traced back to AI-generated code through the first quarter of 2026. Six in January. Fifteen in February. Thirty-five in March. Not a trend line. A hockey stick.

The top five problems they found will be familiar to anyone who has ever done a real security review:

- Hardcoded API keys and tokens checked into public repos

- SQL injection and command injection from unvalidated input

- Broken authentication... non-expiring tokens, missing access controls

- Misconfigured infrastructure... permissive CORS, missing security headers, no rate limiting

- APIs returning whole database records instead of filtered responses

Every one of these is a 2008-era mistake. The difference is that in 2008, the developer who made it learned from a code review or a breach. In 2026, the model that made it is going to make it again tomorrow on a different project for a different team.
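To make two of those items concrete, here is a minimal sketch of injection from unvalidated input and of an API that returns whole records, next to the boring fix for each. The `users` table, its columns, and the function names are made up for illustration. The only library involved is Python's built-in `sqlite3`.

```python
import sqlite3

# Hypothetical schema and data, for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT, email TEXT, password_hash TEXT)"
)
conn.executemany(
    "INSERT INTO users (name, email, password_hash) VALUES (?, ?, ?)",
    [("alice", "alice@example.com", "hash-a"), ("bob", "bob@example.com", "hash-b")],
)

def find_user_unsafe(name):
    # Vulnerable: user input is pasted straight into the SQL string,
    # and SELECT * hands back every column, password hash included.
    query = f"SELECT * FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()

PUBLIC_FIELDS = ("id", "name")

def find_user_safe(name):
    # Parameterized query: the driver treats the input as data, not SQL.
    # Selecting only the public columns keeps the response filtered.
    rows = conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (name,)
    ).fetchall()
    return [dict(zip(PUBLIC_FIELDS, row)) for row in rows]

payload = "nobody' OR '1'='1"
leaked = find_user_unsafe(payload)   # every row comes back, hashes and all
filtered = find_user_safe(payload)   # no user has that literal name
```

The safe version is not clever. It is the pattern a reviewer would have insisted on in 2008, which is the point.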

Mark Jones at Diffian put it well in the same report: "The speed of vibe coding is an advantage, but only if your security practices keep pace. Fast development without security review is fast exposure."

What engineering leaders should do this week

If you run engineering, here are five things to do before Friday. None of them are big. All of them matter.

1. Find out who is vibe-coding

You do not know yet. Ask. Not in a punitive way... in a "we are figuring out our AI tooling strategy and I want to understand current usage" way. Run a five-minute survey. The answers will surprise you.

2. Get a list of every AI-built app touching customer data

If marketing built a survey tool, what survey is it running, where do the responses go, and who has access? If finance has a dashboard, what database is it pulling from? You do not need to shut anything down. You need to know it exists.
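The inventory does not need tooling on day one. As a rough sketch, assuming you keep one record per app, the questions above map onto a handful of fields. The `AppRecord` name, its fields, and the sample entries are hypothetical, not a standard.

```python
from dataclasses import dataclass, field

@dataclass
class AppRecord:
    name: str
    owner: str                      # team or person who built it
    data_sources: list[str]         # databases, spreadsheets, or APIs it reads
    data_destinations: list[str]    # where responses or outputs end up
    has_access: list[str] = field(default_factory=list)
    touches_customer_data: bool = False
    security_scanned: bool = False

def needs_attention(inventory):
    """Names of apps that touch customer data but have not been scanned yet."""
    return [
        app.name
        for app in inventory
        if app.touches_customer_data and not app.security_scanned
    ]

# Made-up entries for illustration.
inventory = [
    AppRecord("survey-tool", "marketing", [], ["responses spreadsheet"],
              ["marketing"], touches_customer_data=True),
    AppRecord("pnl-dashboard", "finance", ["finance database"], [],
              ["finance"], touches_customer_data=False),
]
```

A shared spreadsheet with the same columns does the job equally well. What matters is that the list exists and someone owns it.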

3. Mandate a security scan, not a code review

Code review is the wrong tool here. Most leaders do not have the bandwidth to review every AI-generated PR, and frankly the model will fix anything you flag faster than you flag it. What you need is automation... a secret scanner, a software composition analysis (SCA) tool for vulnerable dependencies, basic static analysis (SAST). Hook it into the deploy pipeline. Make it a gate, not a suggestion.
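To show what "a gate, not a suggestion" means mechanically, here is a deliberately tiny secret-scanner sketch. The three patterns are illustrative assumptions and nowhere near complete, and the function names are my own. In practice you would reach for an established scanner, but the shape is the same: scan, report, return a non-zero exit code if anything turns up.

```python
import re
from pathlib import Path

# Illustrative patterns only; a real scanner ships far more rules.
SECRET_PATTERNS = {
    "AWS access key ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private key block": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
    "hardcoded credential": re.compile(
        r"(?i)\b(?:api[_-]?key|secret|token|password)\b\s*[:=]\s*['\"][^'\"]{12,}['\"]"
    ),
}

def scan_text(text):
    """Return (line_number, rule_name) for every line that matches a pattern."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, rule))
    return findings

def scan_tree(root):
    """Scan every readable text file under root; return (path, line, rule) tuples."""
    results = []
    for path in Path(root).rglob("*"):
        if not path.is_file() or ".git" in path.parts:
            continue
        try:
            text = path.read_text(encoding="utf-8")
        except (UnicodeDecodeError, OSError):
            continue  # skip binaries and unreadable files
        results.extend((str(path), lineno, rule) for lineno, rule in scan_text(text))
    return results

def gate(root="."):
    """Print findings and return an exit code: 0 if clean, 1 otherwise."""
    findings = scan_tree(root)
    for path, lineno, rule in findings:
        print(f"{path}:{lineno}: possible {rule}")
    return 1 if findings else 0
```

A pipeline step that ends with something like `sys.exit(gate("."))` fails the deploy when a finding appears. That failing exit code is the whole difference between a gate and a report nobody reads.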

4. Write a one-page AI development policy

One page. No legalese. It should say what tools are approved, what data is allowed into them, who reviews the output before production, and who to call when something breaks. The 1990s wrote acceptable-use policies that ran forty pages and were useless. Do not repeat the mistake.

5. Find your champions

Somebody on your team is already using AI tools well. They are writing tests for the AI output. They are reviewing dependencies. They are scrubbing prompts of sensitive data. Promote those habits into the team norm. The other path... waiting until your CISO calls about a breach... is more expensive than you want to find out.

The bigger lesson

The thing I keep coming back to is that we already know how to handle this. Shadow IT taught us the playbook... visibility first, governance second, culture last. The companies that won the cloud era were the ones who stopped treating their employees like the problem.

The companies losing the AI era are doing the same dumb thing... blocking ChatGPT at the firewall, telling engineers they are not allowed to use Copilot, pretending they can wall off a tool that is now bundled into the IDE and the operating system. That approach failed in 2012 and it is failing now.

Speed without governance is debt. Always has been. The 1990s called it technical debt. The 2010s called it data debt. In 2026, we have AI-generated security debt sitting in production, accumulating interest, waiting for the moment a security researcher with a scanner notices.

You have a window... perhaps twelve months, perhaps less... before someone in your industry has the breach that defines the category. The leaders who use this window well will treat AI tooling like grown-ups. Visibility, governance, culture. In the order I listed them.

How much of your codebase right now was written by something which has never met your security team?