There's a study you need to read. Not because it's comfortable. Because it should change how you're making decisions right now.

In July 2025, METR, a non-profit AI safety research organisation, ran a randomised controlled trial with 16 experienced open-source developers. Not beginners. People working in their own mature codebases, averaging five years on each project. The randomisation was per task, not per developer: each task was randomly assigned to allow or disallow AI tools, primarily Cursor Pro with Claude 3.5 and 3.7 Sonnet, and the researchers measured how long it took.

When AI was allowed, developers took 19% longer to complete their tasks.

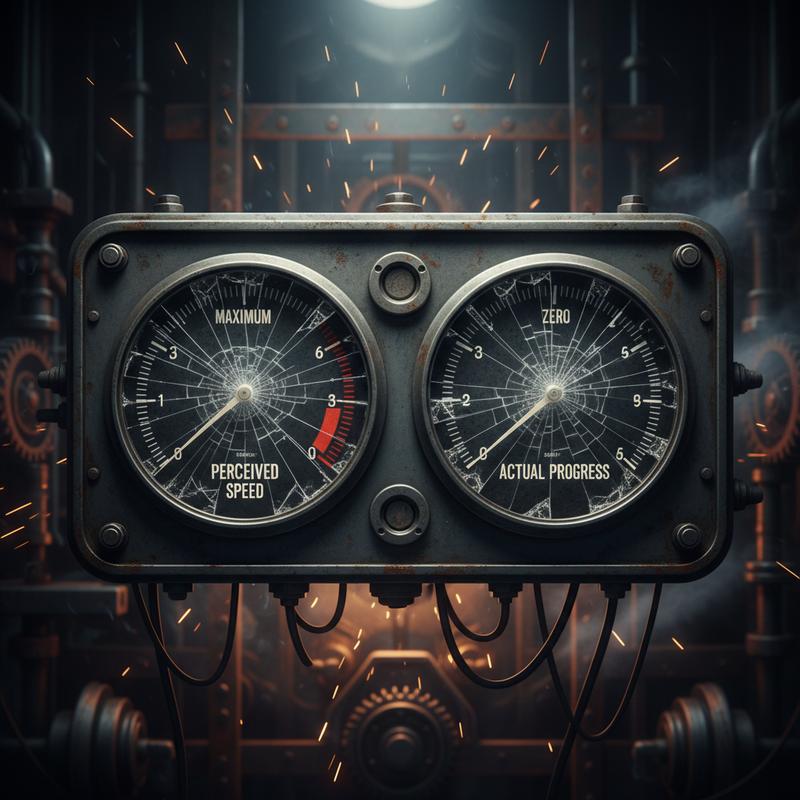

Here's what should keep you up at night: those same developers believed they were working 20% faster.

A gap of roughly 40 percentage points between perception and reality. Not a rounding error. A measurement crisis.

How We Ended Up Here

The AI productivity narrative didn't come from nowhere. In 2023, Microsoft Research published results from a controlled experiment with GitHub Copilot. Developers with the AI pair programmer completed the assigned task 55.8% faster than those without it.

The number travelled fast. Across blog posts, boardroom decks, LinkedIn feeds. It became the figure justifying six-figure AI tool subscriptions. "55% faster." Who wouldn't sign off on it?

What didn't travel as fast was the fine print. The Microsoft study asked developers to implement an HTTP server in JavaScript, from scratch, in isolation. No existing codebase. No accumulated technical debt. No legacy code to understand before touching anything. No other developers whose work needed respecting.

Not what software development looks like. More like a coding challenge. The equivalent of measuring how fast a chef cooks when you hand them a single perfect ingredient and ask them to do one thing with it, then claiming you've measured restaurant kitchen throughput.

Real software development involves reading code you didn't write. Understanding systems grown over years. Debugging errors introduced by AI-generated code that looked right but wasn't. Reviewing pull requests filled with AI output where your job is now to work out what the AI actually did, not simply to confirm that it did something.

None of it shows up in a controlled lab task.

The Measurement Trap

So why doesn't anyone notice the slowdown? Because the metrics most engineering teams track can't detect it.

Lines of code written. Number of commits. Pull requests merged. Tickets closed. AI tools push all of those numbers up. More commits? AI delivers. More lines of code? No problem. More PRs? Easily done.

Meanwhile the real work, delivering reliable and maintainable features at a sustainable pace, gets harder to see. Code review cycles lengthen because AI-generated code requires more scrutiny. Bug rates climb because the AI made plausible-looking but wrong decisions at the edges. Senior engineers spend more time untangling AI output than they'd have spent writing the code themselves.

According to Forbes Research data, 39% of C-suite executives cite measurement challenges as the key obstacle to quantifying AI's business impact. More than a third of the people who approved these tools cannot tell you whether they're working.

And according to analysis by BBN Times, 73% of companies are measuring AI ROI incorrectly.

Billion-dollar decisions are resting on metrics that were already questionable before AI arrived. AI made them worse.

The Feeling Is the Problem

The METR finding that developers felt faster even while working slower is the most important part of this story. It explains why the measurement problem persists.

AI tools create a sensation of momentum. Code appears. Suggestions flow. The cursor moves. You feel productive in a way staring at a blank screen never achieves. The feeling is real. The speed is not.

The same trap catches leaders who measure activity instead of outcomes. The manager scheduling twelve meetings a week and calling it leadership. The executive generating slide decks at volume and calling it strategy. The engineer closing twenty tickets and shipping nothing reliable in production.

Speed of output is not quality of outcome. We knew this before AI. AI is making it more obvious, and more expensive to ignore.

What to Measure Instead

Outcome-based measurement is the answer. Not what your team produced, but what it delivered.

Cycle time (DORA calls it lead time for changes): How long does a change take from first commit to production? AI tools should shrink this if they're helping. If it isn't falling, whatever speed the tools add is landing somewhere that doesn't affect delivery.

Deployment frequency: Are you shipping more often? More reliable code reaching users faster is a genuine signal.

Change failure rate: Of the things you shipped, how many caused incidents or required rollbacks? AI-generated code breaking production is negative productivity.

Mean time to restore: When things break, how quickly do you recover? A team generating more volume with more failures and slower recovery isn't gaining anything.

These are the DORA metrics, and they've existed for years. AI makes it more urgent to use them. Without outcome metrics, the illusion of productivity becomes expensive to maintain.
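If you've never computed these numbers yourself, here's a rough sketch of what it takes. It is not DORA's official method, and it assumes you can export one record per production deployment. The `Deployment` fields, the `dora_summary` function, and the sample data are all made up for illustration; your pipeline will have its own names and its own definition of "incident".

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    """One production deployment (hypothetical record shape)."""
    first_commit_at: datetime
    deployed_at: datetime
    caused_incident: bool = False
    restored_at: Optional[datetime] = None  # only set when an incident was resolved


def dora_summary(deployments: list[Deployment], period_days: int) -> dict:
    """Summarise the four delivery metrics over a reporting period."""
    if not deployments:
        raise ValueError("need at least one deployment")

    # Lead time: first commit to production, in seconds.
    lead_times = [
        (d.deployed_at - d.first_commit_at).total_seconds() for d in deployments
    ]
    failures = [d for d in deployments if d.caused_incident]
    # Restore time: from the failing deployment to recovery, in seconds.
    restore_times = [
        (d.restored_at - d.deployed_at).total_seconds()
        for d in failures
        if d.restored_at is not None
    ]

    return {
        "median_lead_time_hours": median(lead_times) / 3600,
        "deploys_per_week": len(deployments) / (period_days / 7),
        "change_failure_rate": len(failures) / len(deployments),
        "mean_time_to_restore_hours": (
            sum(restore_times) / len(restore_times) / 3600
            if restore_times
            else None
        ),
    }


# Invented sample: four deployments over a two-week period, two of them bad.
t0 = datetime(2025, 1, 6, 9, 0)
sample = [
    Deployment(t0, t0 + timedelta(hours=24)),
    Deployment(t0, t0 + timedelta(hours=48), caused_incident=True,
               restored_at=t0 + timedelta(hours=50)),
    Deployment(t0, t0 + timedelta(hours=72)),
    Deployment(t0, t0 + timedelta(hours=96), caused_incident=True,
               restored_at=t0 + timedelta(hours=100)),
]
summary = dora_summary(sample, period_days=14)
print(summary)
```

Run it for the quarter before your AI rollout and the quarter after. None of these four numbers goes up just because more code got generated, which is the point.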

The Question Worth Asking

If you're a CTO, an engineering manager, or anyone who approved an AI tooling budget in the last two years, the METR study is pointing at a question you need to answer honestly:

What would you see if you measured what your team is delivering, not what it's generating?

Not commits per week. Not lines of code. Not pull requests merged. What reached users, worked reliably, and moved the business?

If you haven't stopped to ask the question since rolling out AI tools, you're making the same mistake as the developers in the METR study. You're feeling fast. You're not necessarily getting anywhere.

The measurement problem in AI productivity is solvable. It's the same problem we've always had with measuring knowledge work. AI stripped away the excuses for ignoring it.

Fix what you measure and you'll know what you're getting. Until then, you're flying by feel in the dark, and feeling confident about it.