Ninety-five percent of generative AI pilots at companies are failing.

Not because the models are bad. Not because the integrations are hard. Because of the people running them.

The number comes from Fortune, via research cited in Harvard Business Review, and it stings. The tech industry has collectively spent hundreds of billions of dollars on AI infrastructure, tooling, and model training. And yet most of it isn't producing results. Organizations are buying the most advanced tools ever created... then watching them gather dust.

The technology is not the problem.

The Numbers Don't Lie

According to the 2026 State of AI Agents Report, when organizations were asked what's blocking them from scaling AI agents, the top three answers were integration challenges (46%), data quality (42%), and change management (39%).

The first two get all the attention. Teams hire more engineers, buy better data pipelines, and invest in architecture reviews. The third one gets a line in the project plan and a two-hour all-hands.

Research from LSE and McKinsey puts a harder number on it: 70% of AI adoption challenges come from people and process issues, not from technology. And yet leaders spend the bulk of their time and budget on the tech side.

A Writer.com survey of enterprise organizations in 2026 found:

- 79% of organizations face challenges adopting AI

- 75% of executives admit their AI strategy is "more for show" than actual guidance

- 54% of C-suite executives say AI adoption is "tearing their company apart"

Tearing it apart. These are billion-dollar companies with access to the best tools available, and over half of their C-suite executives say AI adoption is ripping them in two. What went wrong?

Leaders Are Blaming the Wrong People

McKinsey's research on AI scaling found something uncomfortable: "The biggest barrier to scaling AI is not employees, who are ready, but leaders, who are not steering fast enough."

Read it again. Employees are ready. Leaders aren't steering.

Most leaders I talk to frame their AI problem as a skills gap, a tech gap, or a budget gap. They look at their teams and see resistance. They run surveys asking "Are you comfortable using AI tools?" and they're surprised when the answers disappoint them.

The real question to ask is: have you created the conditions for your team to safely experiment, fail, and share what they're learning?

In most organizations, the answer is no. And the data backs it up. The same Writer.com survey found 29% of employees admit to actively sabotaging their company's AI strategy. Among Gen Z workers, it's 44%.

Employees aren't lazy. They're scared. And fear is a leadership problem.

The Shadow AI Epidemic

Here's what's happening in your organization right now, whether you know it or not. Your team is using AI. They've been using it for months. But they're not telling you.

Psychology Today, citing Amy Edmondson's research on team learning, describes this as the "shadow AI problem": employees run their own experiments, get useful results, but never share the learnings because they don't feel safe doing so. Organizational learning stops at the individual level.

Why don't they share? Two reasons, and both trace back to leadership.

First, they're afraid of being seen as the person who's been doing things "wrong" by using an unapproved tool. Second, they're afraid of being seen as the person who's making their own role easier to automate. Those fears don't exist because your team is irrational. They exist because leadership hasn't made it safe to be transparent.

When people don't trust their leaders, they don't trust the tools their leaders are rolling out. Harvard Business Review puts it plainly: "Employees won't trust AI if they don't trust their leaders."

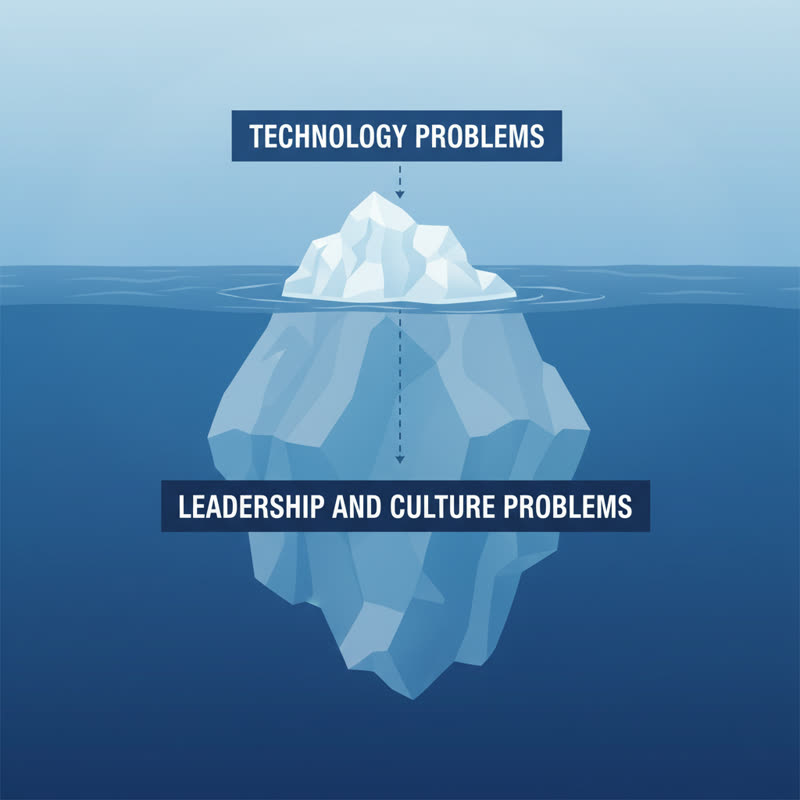

The Iceberg Nobody's Talking About

There's a reason AI rollouts look fine from the top and feel chaotic at team level.

The visible problems get attention: the API integration, the model output quality, the security review. These get assigned to engineers and architects, tracked in Jira, reported to the board.

The invisible problems sit deeper. Leaders who approved the AI budget but haven't touched a single AI tool themselves. Managers who frame AI adoption as a performance pressure rather than a learning opportunity. Organizations where being wrong or slow to adapt gets noticed and punished, so people stay quiet.

Dr. Dorottya Sallai from LSE's Department of Management puts it this way: "AI adoption is a cultural transition," one that requires leaders to overcome "psychological and leadership barriers more than technical ones."

In many organizations, the capability is there. The model exists. The integration works. But nobody's communicated the business case. The "why" is absent. And when people don't understand the why, they write their own story... and it's rarely a good one.

What Good Looks Like

I've spent years working in tech and HR leadership, and I've seen AI rollouts succeed and fail. The difference isn't the tool. It's always the environment the leader creates.

At Step It Up HR, we work on the 3 A's: Awareness, Acceptance, Action. You don't get Action from a team that hasn't worked through Awareness and Acceptance. Forcing an AI rollout on a team that's scared isn't change management. It's coercion. And coercion creates sabotage.

A few things I've seen work:

Make it safe to be a beginner. The fastest way to kill AI adoption is to celebrate the super-users and ignore everyone else. If your AI "champions" get praised in all-hands while slower adopters feel shame, you've built a two-tier culture. Two-tier cultures breed resentment, not adoption.

Show your own failures. If you're in a leadership role and you're not showing your team where AI gave you a rubbish output or where you got the prompt completely wrong, you're missing the most effective culture signal available. Vulnerability from leaders is what makes it safe for everyone else.

Slow down to go faster. McKinsey found effective AI leaders grow revenue 1.5 times faster than their peers. But they didn't get there by rushing. They invested in the culture infrastructure first. Teams running experiments win. Teams mandating adoption lose.

Four Questions to Ask Yourself

Before you buy another AI tool, run another rollout, or write another policy, sit with these:

- Does your team feel safe telling you when an AI tool isn't working?

- Do you know which team members are quietly using AI tools you haven't approved?

- Have you shown your team your own AI learning journey, including the failures?

- When someone raises concerns about AI adoption, do you treat it as a technical problem or a human one?

If you winced at any of those, start there.

This week, Reddit has been full of workers sharing their unease about agentic AI taking over more of the task layer. The anxiety is real. What your team needs from you isn't a policy. It's a conversation.

The Leadership Variable

The 2026 AI adoption data is unambiguous. Organizations with a strong leadership culture built on psychological safety, transparent communication, and genuine employee voice are adopting AI faster and getting more out of it. Organizations that rely on mandates, monitoring, and fear are producing shadow AI users and saboteurs.

If your AI rollout is struggling, look at the leadership culture before looking at the tech stack.

What would it look like if people in your organization felt safe enough to tell you the truth about how AI is landing? Start there.