GPT-5.5 dropped on April 23. GPT-5.4 dropped on March 4. Forty-nine days apart. By the time your team finished retraining users on the last one, the floor moved again.

If you are building an AI product right now, this is the question I get asked most. How do you ship something durable when the underlying model gets replaced every six weeks?

Here is my answer. Stop treating the model as the product. The model is a shoe. Shoes wear out.

The cadence is the actual story

It is worth sitting with the numbers for a minute, because most teams I talk to have not internalised them.

According to release tracking data, OpenAI's median gap between frontier model releases has compressed from 170.5 days in 2023 to 84.5 in 2024, then 58 in 2025, and 49 days in 2026 year to date. A 70% compression in three years.

Twelve frontier model updates shipped across the industry in February 2026 alone. Between April 16 and 24 of this year, the leaderboard for the best coding model changed hands three times in five days.

Then GPT-5.5 Instant landed on May 5, replacing the default model in ChatGPT for hundreds of millions of users without any notice to the people who built workflows around the old behaviour.

This is not slowing down. It is the new baseline.

The mistake almost everyone is making

I see the same pattern over and over. A team picks a model, builds a product around its specific quirks, ships, and then... the model changes underneath them.

Suddenly the prompts no longer produce the same output. The tool calls fire differently. The token costs shift. The context window grows but the way long contexts are handled changes. Users hit unexpected behaviour and complain. The team spends two weeks "tuning for the new model" and ships a patch.

Six weeks later it happens again.

If you have a roadmap longer than the gap between model releases, your roadmap is fiction. You are not building a product. You are running a treadmill.

What durable looks like

Here is the shift I keep recommending to founders and engineering leaders. Stop building on model versions. Start building on behaviours.

What does this mean in practice? Three things.

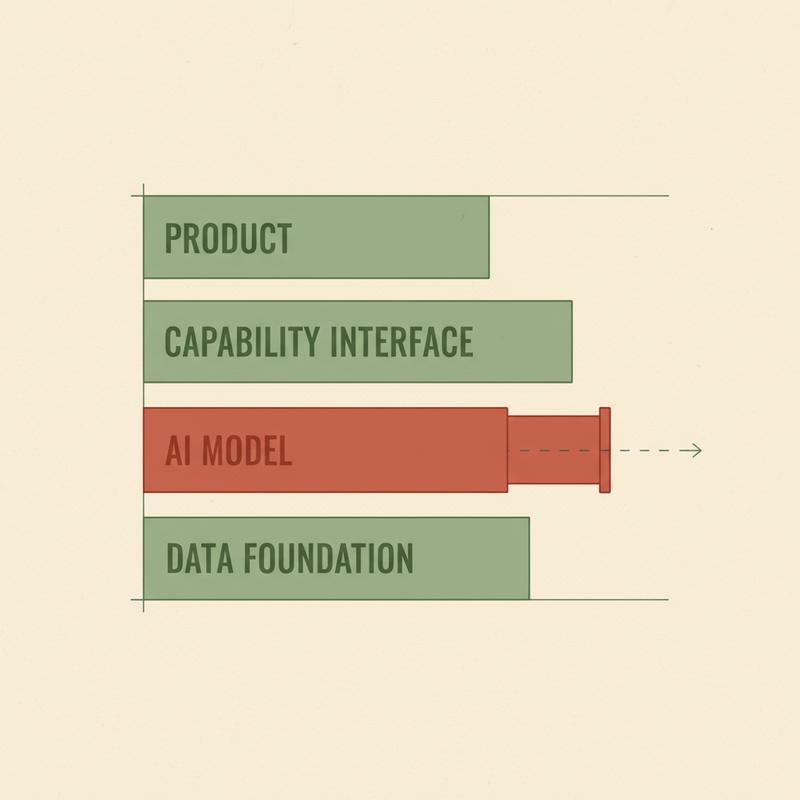

1. Put a capability layer between your product and any specific model

Your product code should never call the OpenAI SDK directly. It should call a capability interface you own. Something like summarise(text, audience) or classify(input, taxonomy). The implementation behind this interface is where the model lives, and nowhere else.

When GPT-5.6 lands in July, you change one file. Your product code does not move.

There are tools doing most of this work for you. LiteLLM is a unified API across 100+ model providers. Multiple frameworks now offer this same pattern as a service. Pick one and use it. Do not roll your own and do not skip this layer because you are in a hurry. Speed without structure bites you in seven weeks.

2. Define your product by user behaviour, not model behaviour

This is the harder shift, and most teams skip it.

Ask the question this way. What does my user need to feel, see, or get done? Not "what does GPT-5.5 do beyond GPT-5.4?" but "what is the human outcome I am responsible for?"

A feedback tool needs to ask the right question at the right time and surface the right reflection. The fact it uses an LLM to do some of the work is a detail. The product is the feedback loop, not the model.

If your product description includes the words "powered by GPT-5.5," your product is in trouble. Users do not buy models. They buy outcomes.

3. Treat model swaps as a config change, not a release

When your AI layer is properly abstracted, swapping models becomes a config change with a regression suite. Not a sprint. Not a rewrite. Not a meeting.

The teams winning right now have an evaluation suite running every prompt template against the new model the day it drops. They know within hours whether the swap is clean or whether they need to adjust. The teams losing right now are still gathering requirements for the swap two months after the new model shipped.

I am not sure about this part: the exact percentage of teams running automated model evaluation suites, but every founder I know who is building seriously on AI either has one or is building one this quarter. If you do not, build one.

The cultural cost nobody talks about

There is a quieter problem here, and it is the one I think matters most.

Your team is exhausted.

Engineers have to relearn the model every six weeks. Product managers have to recheck every assumption. Designers have to revalidate every flow. Customer success has to retrain users who finally got comfortable with the last version.

This is not sustainable. People burn out. They stop trusting the platform. They start hedging. They build defensive code. They add layers of "is this still working" checks slowing everything down.

The teams I see surviving this have done one thing. They have made the abstraction the work. The team's job is not to be on the bleeding edge of every model release. The team's job is to maintain a durable product surface customers trust, while the model underneath gets swapped for free.

This is a leadership decision, not a technical one. It is the difference between a team shipping and a team thrashing.

What I would do if I were starting today

If I were standing up a new AI-native product right now, I would do these five things, in this order.

- Pick the cheapest, fastest model hitting the quality bar for my use case. Not the smartest. The smartest model is overkill for most things and will get replaced anyway.

- Wrap every model call in a capability function I own. Never let model-specific code leak into the product.

- Build a small evaluation suite on day one. Twenty representative prompts, expected output ranges, a green or red signal.

- Write the product description without naming the model. If I cannot, I have not figured out what the product is.

- Set a calendar reminder every six weeks to run the eval against the latest models and see if I should swap.

This is the loop. Unglamorous. Also the only way I have seen anyone build something surviving more than two model releases.

The real moat

The thing I want you to take from this is simple. Models are not your moat. Speed is not your moat anymore either, now the App Store is seeing a flood of new AI-built apps weekly thanks to AI tooling.

Your moat is the product surface your users learn to trust. The interface they get good at. The workflow you own. The data you accumulate about how they work. This survives a model swap.

The frontier labs are sprinting. Let them. Build something indifferent to which one is winning this week.

What is the thing your product would still be useful for if every model on the market got replaced tomorrow morning?

If you cannot answer the question, you have your next month's work.